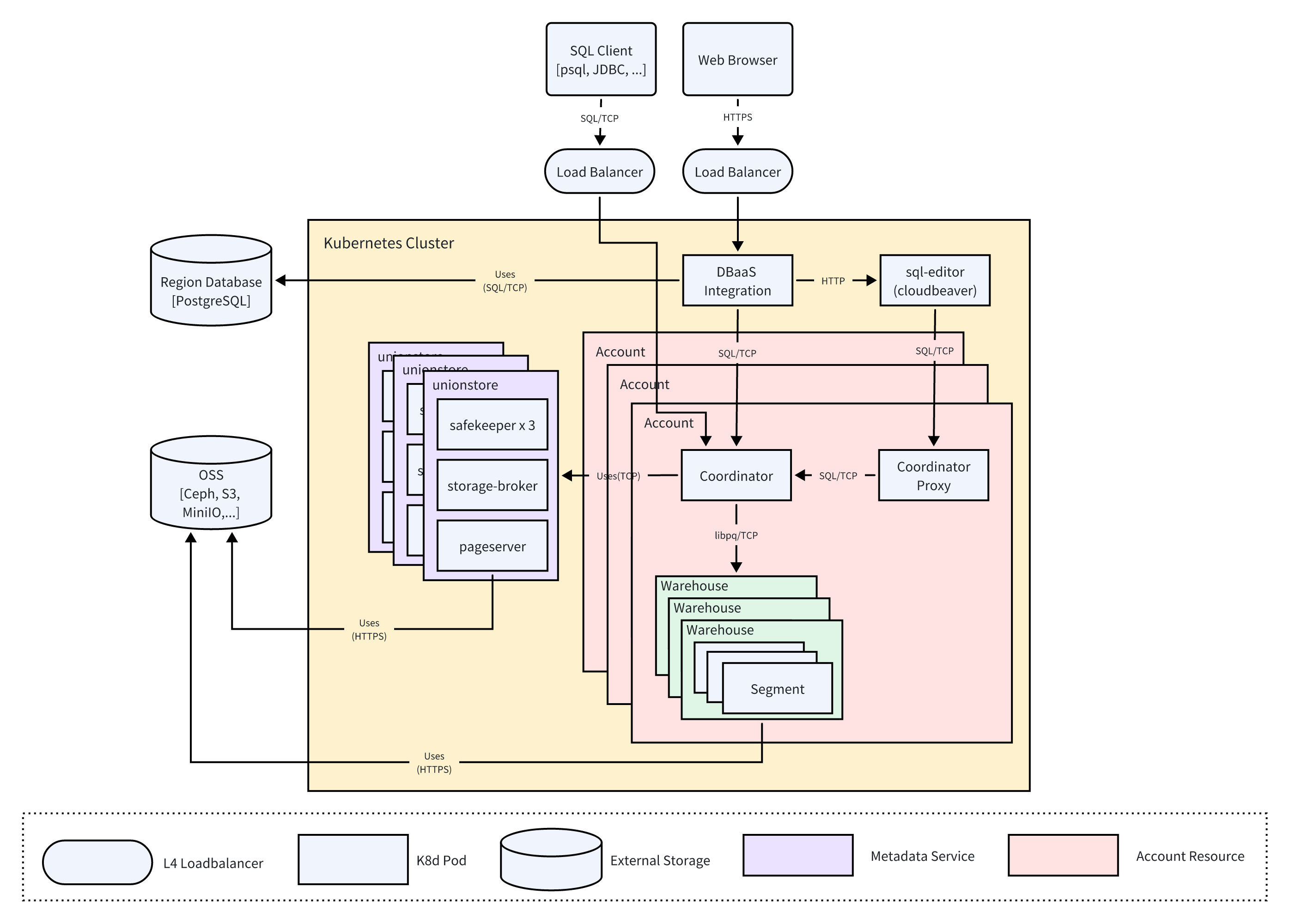

System Deployment Architecture

This document explains the physical deployment topology, component distribution, network access patterns, and multi-tenant resource isolation model of SynxDB Cloud, a cloud-native data warehouse, in a Kubernetes environment.

SynxDB Cloud is fully deployed within a Kubernetes cluster and relies on external high-availability storage services. Its deployment architecture follows cloud-native design principles, with all internal services running as Pods, achieving a high degree of automation, elasticity, and portability.

The deployment architecture has the following features:

Multi-tenant isolation: Achieves compute and metadata isolation between tenants through account resources.

Layered deployment: Logically separates platform management, metadata services, and tenant computing resources into distinct layers with clear responsibilities.

Unified entry point: Provides a unified, highly available service entry point for different types of access (SQL clients and web browsers) through L4 load balancers.

Externalized storage: Critical stateful data (region metadata and primary business data) is stored in specialized storage services outside the cluster, ensuring data persistence and reliability.

The following sections describe the deployment environment and its boundaries, followed by the deployment of the core components shown in the diagram above.

Deployment environment and boundaries

External dependencies

The system relies on the following external storage services to operate:

Region Database (Postgres): An independent PostgreSQL database instance used to store platform-level metadata (for example, tenant information, user permissions). The DBaaS Integration service communicates with it via the SQL/TCP protocol.

OSS (Object Storage Service): An object storage service (for example, Ceph and AWS S3) that serves as the underlying data lake for the data warehouse, persistently storing all business data for tenants.

Network entry points

All external traffic to the system is routed through two kinds of dedicated L4 load balancers:

HTTPS Load Balancer: Receives HTTPS traffic from web browsers and forwards it to the backend DBaaS Integration service, providing access to the user console and the operations management console.

SQL/TCP Load Balancer: One is deployed per tenant (Account). It receives SQL/TCP traffic from SQL clients (for example, psql, JDBC) and forwards it to the tenant’s Coordinator service for database queries and operations.
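
The routing rules above can be summarized in a small sketch. It is an illustration only: the route function, the Backend type, and the account name are hypothetical and are not part of SynxDB Cloud.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Backend:
    """Hypothetical description of where a load balancer forwards traffic."""
    service: str
    account: Optional[str] = None  # None for the shared platform service


def route(protocol: str, account: Optional[str] = None) -> Backend:
    """Map an incoming connection to its backend, following the rules above."""
    if protocol == "https":
        # Browser traffic lands on the shared DBaaS Integration service.
        return Backend(service="DBaaS Integration")
    if protocol == "sql/tcp":
        # Each Account has its own SQL/TCP load balancer, so the account is
        # implied by the load balancer that accepted the connection.
        if account is None:
            raise ValueError("SQL/TCP traffic must arrive on a tenant load balancer")
        return Backend(service="Coordinator", account=account)
    raise ValueError(f"unsupported protocol: {protocol!r}")


print(route("https"))
print(route("sql/tcp", account="account-a"))
```

Keeping the tenant identity tied to the load balancer, rather than to anything inside the SQL session, is what lets each Coordinator stay dedicated to a single Account.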

Core component deployment

All application components are deployed as Pods within the Kubernetes cluster and are organized into the following logical service units based on their functionality:

Platform management services

This is the control plane for platform administrators, and it is independent of the tenant data plane.

DBaaS Integration: The core Pod for the platform’s backend management service. It processes management requests from web browsers and interacts with the external region database to manage platform metadata.

sql-editor (cloudbeaver): A frontend service Pod for the web-based SQL editor. It provides users with a graphical interface and communicates with DBaaS Integration via HTTP to proxy user SQL requests.

Metadata service

This is a dedicated cluster responsible for managing compute metadata for tenants (accounts), such as table structures, view definitions, and statistics.

High availability and scalability: Metadata is persisted to FoundationDB, leveraging its high availability and horizontal scalability to support ultra-large-scale compute clusters.

High-performance access: The metadata service supports multi-node deployment to enhance its service capabilities, enabling a “one-write, multiple-reads” model. It also allows concurrent read and write access from multiple tenant Coordinator nodes, ensuring efficient metadata interaction.

Metadata and storage services (UnionStore)

This is a logical group of collaborating Pods that form the system’s distributed storage engine. It is shared by all tenants and is responsible for managing the data lifecycle between the compute layer and OSS.

pageserver: The core Pod responsible for managing data page caching and data exchange with OSS.

storage-broker: A proxy Pod that facilitates communication between the compute layer and the storage engine.

safekeeper: A high-availability cluster consisting of three instance Pods. It is responsible for synchronously receiving and persisting transaction logs (WAL) to ensure transaction durability.
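
The text above does not state which acknowledgement rule the safekeeper cluster uses. The sketch below assumes a strict majority (two of three), a common choice for replicated logs, purely to illustrate how synchronous WAL persistence across three instances can tolerate the loss of one. The is_durable function is hypothetical.

```python
SAFEKEEPER_COUNT = 3  # from the text: three safekeeper instance Pods


def is_durable(acks: int, total: int = SAFEKEEPER_COUNT) -> bool:
    """Return True when a WAL record has enough acknowledgements.

    Assumption: a strict majority is required; the real rule may differ.
    """
    if not 0 <= acks <= total:
        raise ValueError("acks must be between 0 and total")
    return acks > total // 2


# Show the decision for every possible number of acknowledgements.
for acks in range(SAFEKEEPER_COUNT + 1):
    print(acks, is_durable(acks))
```

Under this assumption, a commit can proceed while one safekeeper Pod is restarting, but not while two are unavailable.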

Tenant resources (Account)

This is a logical resource unit that is central to achieving multi-tenant isolation in the system. Each Account represents an independent tenant environment with its own dedicated computing resources and metadata. The system can run multiple Account instances simultaneously.

Each Account resource unit contains the following dedicated Pods:

Coordinator: A tenant-specific query coordinator Pod that serves as the entry point for all SQL requests for that tenant.

Coordinator Proxy: A tenant-specific query proxy Pod that primarily handles connection requests from the sql-editor.

Warehouse (compute cluster): One or more pools of computing resources. Each Warehouse consists of multiple Segment Pods that can directly access OSS. A Warehouse is elastically scalable, allowing tenants to dynamically adjust the number of Segment Pods based on their workload demands.
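
The structure of an Account can be modeled roughly as follows. This is a minimal sketch, not the platform's API: the class and method names are hypothetical, and it assumes exactly one Coordinator Pod and one Coordinator Proxy Pod per Account and at least one Segment Pod per Warehouse.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Warehouse:
    """A pool of Segment Pods belonging to one Account."""
    name: str
    segments: int  # current number of Segment Pods

    def scale_to(self, segments: int) -> None:
        # Assumption: a Warehouse keeps at least one Segment Pod.
        if segments < 1:
            raise ValueError("a Warehouse needs at least one Segment Pod")
        self.segments = segments


@dataclass
class Account:
    """An isolated tenant environment with its own dedicated Pods."""
    name: str
    warehouses: Dict[str, Warehouse] = field(default_factory=dict)

    def add_warehouse(self, name: str, segments: int) -> Warehouse:
        if name in self.warehouses:
            raise ValueError(f"warehouse {name!r} already exists")
        if segments < 1:
            raise ValueError("a Warehouse needs at least one Segment Pod")
        warehouse = Warehouse(name=name, segments=segments)
        self.warehouses[name] = warehouse
        return warehouse

    def pod_count(self) -> int:
        # Assumed: one Coordinator + one Coordinator Proxy, plus all Segment Pods.
        return 2 + sum(w.segments for w in self.warehouses.values())


account = Account("account-a")
account.add_warehouse("etl", segments=4)
account.add_warehouse("bi", segments=2)
print(account.pod_count())

# Scale one Warehouse without touching the other.
account.warehouses["etl"].scale_to(8)
print(account.pod_count())
```

Because each Warehouse is sized independently, a tenant can grow the pool serving one workload while leaving the others unchanged.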